Running DeepSeek Locally: The Only Safe and Private Way

Artificial Intelligence is evolving at an unprecedented pace, and DeepSeek R1 is the latest model making waves. It’s powerful, efficient, and, most importantly, open-source. This means you can run it on your own computer instead of relying on cloud-based AI services. But is running AI locally truly safe? How do you ensure your data stays private?

In this article, we will explore why running AI models locally is crucial, the best ways to do it, and the safest methods to protect your data while using DeepSeek R1.

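To run DeepSeek R1 locally and ensure privacy:

- Install LM Studio or Ollama.

- Download a DeepSeek R1 model sized for your hardware.

- Check that the model makes no external network connections.

- Optionally, isolate it in a Docker container.

Result: Full AI power, zero data leaks.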

Why You Should Run AI Models Locally

1. Privacy Concerns with Cloud-Based AI Services

When you use AI models through online platforms, your data is sent to their servers. This means:

- Your personal conversations, queries, and data are stored on external servers.

- Companies can use your data for analysis, training, or even share it with third parties.

- If the AI provider’s servers are located in certain countries, they may be subject to local cybersecurity laws, allowing authorities access to stored data.

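DeepSeek’s hosted service is particularly concerning in this regard because its servers are located in China. Under China’s cybersecurity laws, authorities can request access to any stored data, raising concerns about user privacy. The concern applies to the hosted app and API, not to a copy of the model running on your own machine.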

2. OpenAI and the Rise of DeepSeek R1

DeepSeek R1 has shocked the AI world. It is reported to match or beat many top models on reasoning benchmarks, including those behind ChatGPT, despite being trained with significantly fewer resources. Unlike OpenAI’s flagship models, which are closed-source and require cloud access, DeepSeek R1’s weights are openly released, allowing users to run it locally without exposing their data to external servers.

3. Local AI Models Offer Full Control

Running AI locally ensures:

- No external access to your data – everything stays on your computer.

- Faster response times – no network round trip, so speed depends on your hardware rather than your internet connection.

- Full control over customization – tweak models according to your needs.

However, setting up AI models locally requires some technical knowledge, so let’s break down the process step by step.

How to Run DeepSeek R1 Locally

There are two excellent methods to run DeepSeek R1 on your computer:

- LM Studio – Best for beginners with a user-friendly interface.

- Ollama – A powerful CLI-based tool for advanced users.

1. Running DeepSeek R1 with LM Studio

LM Studio is a GUI-based tool that simplifies running AI models locally. Here’s how you can set it up:

Step 1: Download LM Studio

- Visit lmstudio.ai and download the version for your operating system (Windows, macOS, or Linux).

- Install and launch the software.

Step 2: Download a DeepSeek Model

- Open LM Studio and navigate to the “Discover” tab.

- Search for DeepSeek R1 and select a model size based on your hardware capabilities.

- Click Download and wait for the installation to complete.

Step 3: Run the Model Locally

- After installation, load the model in LM Studio.

- Start a conversation by typing your prompt and interacting with the AI.

- Enjoy a fully offline AI experience with no external data sharing.

Hardware Requirements:

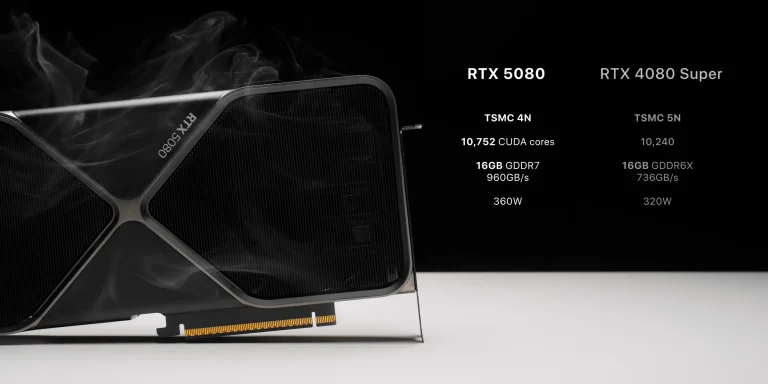

- The larger the model, the more powerful your hardware needs to be.

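- Smaller distilled models like 7B or 14B run on most modern computers with enough RAM, while 70B and larger models require high-end hardware (e.g., one or more GPUs in the class of the NVIDIA RTX 4090, which has 24 GB of memory).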
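
To make the size guidance above concrete, here is a rough back-of-the-envelope sketch in Python. It assumes 4-bit quantized weights and a 20% allowance for runtime overhead; both figures are illustrative assumptions, not values taken from LM Studio or DeepSeek, and the function name is hypothetical.

```python
# Rough memory estimate for a quantized model (illustrative assumptions only).

def estimate_memory_gb(params_billions: float, bits_per_weight: int = 4,
                       overhead: float = 0.2) -> float:
    """Approximate RAM/VRAM needed to load a model, in gigabytes.

    bits_per_weight and overhead are assumed values, not measured ones.
    """
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + overhead) / 1e9


if __name__ == "__main__":
    for size in (7, 14, 32, 70):
        print(f"{size}B at 4-bit: ~{estimate_memory_gb(size):.1f} GB")
```

Under these assumptions a 7B model needs roughly 4.2 GB and a 70B model roughly 42 GB, which is more than a single 24 GB GPU can hold. Real usage also grows with context length, so treat the numbers as a lower bound.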

2. Running DeepSeek R1 with Ollama

For users comfortable with command-line interfaces (CLI), Ollama is a great alternative. It is lightweight, efficient, and perfect for those who want more control.

Step 1: Install Ollama

- Visit ollama.ai and download the installer for your OS (Windows, Mac, or Linux).

- Install it and open a terminal (Command Prompt, PowerShell, or Terminal).

Step 2: Download and Run a DeepSeek Model

- In your terminal, run:

ollama pull deepseek-r1

This command downloads the default DeepSeek R1 model to your local machine. To pick a specific size, append a tag, for example deepseek-r1:14b.

- To start the AI, use:

ollama run deepseek-r1

Once downloaded, the model runs on your machine and needs no internet connection.
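
Besides the interactive prompt, Ollama also exposes an HTTP API on your own machine, by default on port 11434. The following is a minimal sketch of calling it from Python using only the standard library. It assumes the Ollama server is running locally and the deepseek-r1 model has already been pulled; the helper names build_request and ask are made up for this example.

```python
import json
import urllib.request

# Ollama's default local endpoint; traffic never leaves this machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "deepseek-r1") -> urllib.request.Request:
    """Build a POST request for the local Ollama server (nothing is sent yet)."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "deepseek-r1", timeout: float = 300.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Calling ask("Why is the sky blue?") returns the model's answer as a string. Because the URL points at localhost, the prompt and the reply stay on your computer.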

Step 3: Verify AI’s Internet Access (Optional but Recommended)

It’s crucial to confirm that the AI model is not secretly connecting to the internet. You can use network monitoring tools like:

- PowerShell script (Windows):

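Get-NetTCPConnection | Where-Object { $_.RemoteAddress -notin '0.0.0.0', '::' }

This lists every TCP connection on the machine that has a remote address, not only those opened by the AI. Compare the OwningProcess value of each entry with the process ID of Ollama or LM Studio to see which connections belong to it.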

- Wireshark (Mac/Linux/Windows) – A more advanced network monitoring tool.

After testing, you should see no connections from the model to external servers while it answers prompts. That is good evidence that your conversations stay on your machine.
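
When reading the output of a network monitor, the key question is which remote addresses are actually outside your machine and local network. As a small cross-platform aid, the sketch below uses Python's standard ipaddress module to separate public addresses from loopback and private ones. The function names and the sample addresses are made up for illustration; in practice you would paste in the remote addresses reported by your monitoring tool.

```python
import ipaddress


def is_external(address: str) -> bool:
    """Return True if the address is a public (globally routable) IP."""
    return ipaddress.ip_address(address).is_global


def external_only(addresses):
    """Filter a list of remote addresses down to the public ones."""
    return [a for a in addresses if is_external(a)]


if __name__ == "__main__":
    # Made-up sample: loopback, home-network, IPv6 loopback, public address.
    sample = ["127.0.0.1", "192.168.1.20", "::1", "8.8.8.8"]
    print(external_only(sample))  # prints ['8.8.8.8']
```

Any address that survives this filter and belongs to the AI process deserves a closer look. Loopback entries such as 127.0.0.1 are expected, since the chat interface talks to the model over a local port.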

Enhancing Security: Running AI in a Docker Container

While running AI models locally is a big step towards privacy, there’s always a small risk of system access vulnerabilities. To isolate AI models from the rest of your operating system, Docker provides an extra layer of security.

Why Use Docker?

- Isolates AI from your main OS – Prevents unauthorized file access.

- Limits network permissions – The container’s network can be restricted or switched off entirely (for example with --network none) so the AI cannot connect to the internet.

- GPU support – Containers can use NVIDIA GPUs on Windows and Linux.

Setting Up Docker for DeepSeek R1

Step 1: Install Docker

- Download and install Docker Desktop from docker.com.

- If using Windows, install Windows Subsystem for Linux (WSL 2), which Docker Desktop can use as its backend.

Step 2: Run DeepSeek R1 in Docker

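- Open a terminal and start the Ollama server in a container:

docker run -d --name ollama --gpus all -v llama_settings:/root/.ollama -p 127.0.0.1:11434:11434 --privileged=false --memory=8g --cpus=4 --read-only ollama/ollama

- Then start the model inside that container:

docker exec -it ollama ollama run deepseek-r1

- Uses Docker’s GPU access (--gpus all) for faster performance.

- Prevents AI from accessing sensitive files. The container sees only its own filesystem and the llama_settings volume, and --read-only keeps its root filesystem immutable. If Ollama fails to start in read-only mode, drop that flag. Raise --memory for models larger than about 8 GB.

- Limits network exposure. The API port is published on 127.0.0.1 only, so other machines cannot reach it. The container can still make outbound connections, which it needs to download the model. To cut outbound access entirely, recreate the container with --network none once the model is downloaded.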

Step 3: Verify Isolation

- Run a network monitoring check inside Docker to ensure no external data transmission.

- Confirm that DeepSeek R1 is running fully offline.
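
One way to get a container that is offline by construction is a two-phase setup: run with normal networking while the model downloads, then recreate the container with Docker's --network none option, reusing the same volume. The Python sketch below only builds the two command lines so the difference is easy to see. The helper name is hypothetical, and the container and volume names are carried over from the command in Step 2.

```python
import shlex


def ollama_container_cmd(offline: bool, volume: str = "llama_settings") -> list[str]:
    """Build a `docker run` command for the ollama/ollama image.

    offline=False: normal networking, needed while downloading the model.
    offline=True:  --network none, so the container has no network at all.
    """
    cmd = ["docker", "run", "-d", "--name", "ollama",
           "-v", f"{volume}:/root/.ollama"]
    if offline:
        cmd += ["--network", "none"]
    else:
        # Publish the API on the loopback interface only.
        cmd += ["-p", "127.0.0.1:11434:11434"]
    return cmd + ["ollama/ollama"]


if __name__ == "__main__":
    print(shlex.join(ollama_container_cmd(offline=False)))
    print(shlex.join(ollama_container_cmd(offline=True)))
```

Between the two phases, remove the first container with docker rm -f ollama; the downloaded model survives in the named volume. With --network none no port can be published, so you talk to the model through docker exec -it ollama ollama run deepseek-r1 instead of the HTTP port.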

Final Thoughts: The Future of Local AI

Running AI models like DeepSeek R1 locally is a game-changer. It allows users to harness the power of AI without compromising privacy. By using tools like LM Studio, Ollama, and Docker, you can ensure that your data remains secure while still benefiting from AI’s capabilities.

While the setup process may seem technical, it’s well worth the effort. As AI continues to evolve, local AI models may become the new standard for privacy-conscious users and developers.

Would you try running AI locally? Let us know your thoughts in the comments!